Execute dbt-teradata transformation jobs in Apache Airflow using the Astronomer Cosmos library

Overview

This tutorial demonstrates how to install Apache Airflow on a local machine, configure a workflow that runs dbt transformations with dbt-teradata through the Astronomer Cosmos library, and run it against a Teradata Vantage database. Apache Airflow is a task scheduling tool that is typically used to build data pipelines that process and load data. The Astronomer Cosmos library simplifies orchestrating dbt data transformations in Apache Airflow: it lets you run dbt Core projects as Apache Airflow DAGs and Task Groups with a few lines of code.

Use the Windows Subsystem for Linux (WSL) on Windows to try this quickstart example.

Prerequisites

- Access to a Teradata Vantage instance, version 17.10 or higher.

If you need a test instance of Vantage, you can provision one for free at https://clearscape.teradata.com

- Python 3.8, 3.9, 3.10 or 3.11 with python3-venv and python3-pip installed.

- Linux

- WSL

- macOS

Run in Powershell:

Refer to the Installation Guide if you face any issues.

Install Apache Airflow and Astronomer Cosmos

- Create a new Python environment to manage Airflow and its dependencies. Activate the environment and install Astronomer Cosmos:

Note: This will install Apache Airflow as well.

- Install the Apache Airflow Teradata provider.

- Set the AIRFLOW_HOME environment variable.
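In a shell, these installation steps might look like the sketch below. It rests on a few assumptions: the environment name airflow_env and the location ~/airflow are illustrative choices, and no package versions are pinned. Pin versions or pass an Airflow constraints file if you need reproducible installs.

```shell
# Create and activate a virtual environment for Airflow
# (the name airflow_env is an arbitrary choice).
python3 -m venv airflow_env
. airflow_env/bin/activate

# Installing Astronomer Cosmos pulls in Apache Airflow as a dependency.
pip install astronomer-cosmos

# Install the Teradata provider for Airflow.
pip install apache-airflow-providers-teradata

# Tell Airflow where to keep its configuration, metadata database, and dags folder
# (~/airflow is assumed here).
export AIRFLOW_HOME="$HOME/airflow"
```

The export only lasts for the current shell session, so repeat it (or add it to your shell profile) whenever you open a new terminal for Airflow.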

Install dbt

- Create a new Python environment to manage dbt and its dependencies. Activate the environment:

- Install the dbt-teradata and dbt-core modules:
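A possible command sequence for these two steps, assuming a fresh terminal (so the Airflow environment is not active) and no pinned versions:

```shell
# Create and activate a separate virtual environment for dbt.
# dbt_env is the environment name this tutorial refers to later on.
python3 -m venv dbt_env
. dbt_env/bin/activate

# Install the Teradata adapter together with dbt Core.
pip install dbt-teradata dbt-core
```

Keeping dbt in its own environment avoids dependency conflicts between dbt and Airflow.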

Setup dbt project

- Clone the jaffle_shop repository and cd into the project directory:

- Make a new folder, dbt, inside the $AIRFLOW_HOME/dags folder. Then copy the jaffle_shop dbt project into the $AIRFLOW_HOME/dags/dbt directory.
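The copy step can be sketched as follows. The clone command is left out because the repository URL is not given here. JAFFLE_SHOP_SRC is a hypothetical variable introduced only for this example; point it at wherever you cloned the project.

```shell
# Fall back to ~/airflow if AIRFLOW_HOME is not set in this shell (an assumption).
: "${AIRFLOW_HOME:=$HOME/airflow}"

# Hypothetical location of the cloned project; adjust to your clone.
JAFFLE_SHOP_SRC="${JAFFLE_SHOP_SRC:-$PWD/jaffle_shop}"

# Create the dbt folder under the Airflow dags directory.
mkdir -p "$AIRFLOW_HOME/dags/dbt"

# Copy the project so that it ends up at $AIRFLOW_HOME/dags/dbt/jaffle_shop.
if [ -d "$JAFFLE_SHOP_SRC" ]; then
  cp -r "$JAFFLE_SHOP_SRC" "$AIRFLOW_HOME/dags/dbt/"
else
  echo "jaffle_shop project not found at $JAFFLE_SHOP_SRC" >&2
fi
```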

Configure Apache Airflow

- Switch to the virtual environment where Apache Airflow was installed in the Install Apache Airflow and Astronomer Cosmos section.

- Set the environment variables that enable the test connection button and prevent Airflow from loading sample DAGs and default connections in the UI.

- Define the path of the jaffle_shop project as an environment variable, dbt_project_home_dir.

- Define the path to the virtual environment where dbt-teradata was installed as an environment variable, dbt_venv_dir.

Note: You might need to change /../../ to the specific path where the dbt_env virtual environment is located.
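The settings described above could be exported like this. The AIRFLOW__SECTION__KEY names are Airflow's standard configuration overrides and are not spelled out in the text above. The assumption that dbt_venv_dir points at the dbt executable (bin/dbt) inside the environment is also ours. The /../../ prefix is a placeholder that you must replace.

```shell
# Fall back to ~/airflow if AIRFLOW_HOME is not set in this shell (an assumption).
: "${AIRFLOW_HOME:=$HOME/airflow}"

# Enable the test connection button in the Airflow UI.
export AIRFLOW__CORE__TEST_CONNECTION=Enabled
# Do not load the sample DAGs shipped with Airflow.
export AIRFLOW__CORE__LOAD_EXAMPLES=false
# Do not create Airflow's default connections.
export AIRFLOW__DATABASE__LOAD_DEFAULT_CONNECTIONS=false

# Path of the jaffle_shop dbt project copied into the dags folder.
export dbt_project_home_dir="$AIRFLOW_HOME/dags/dbt/jaffle_shop"

# Path into the dbt_env virtual environment; replace /../../ with its real location.
# Assumption: the value points at the dbt executable inside that environment.
export dbt_venv_dir=/../../dbt_env/bin/dbt
```

Set these in the same shell session that later starts Airflow, because Airflow reads them at startup.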

Start Apache Airflow web server

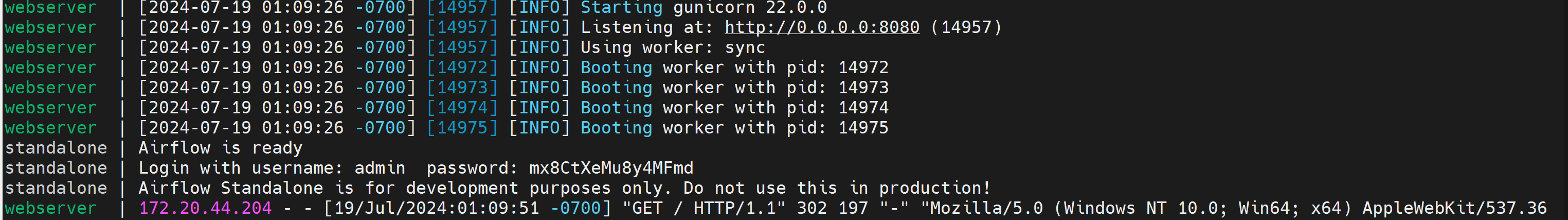

- Run the Airflow web server (for example, with the airflow standalone command).

- Access the Airflow UI. Visit http://localhost:8080 in the browser and log in with the admin account details shown in the terminal.

Define Apache Airflow connection to Vantage

- Click Admin > Connections.

- Click + to define a new connection to the Teradata Vantage instance.

- Define the new connection with the id teradata_default and the Teradata Vantage instance details:

  - Connection Id: teradata_default

  - Connection Type: Teradata

  - Database Server URL (required): Teradata Vantage instance hostname to connect to.

  - Database: jaffle_shop

  - Login (required): database user

  - Password (required): database user password
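As a scriptable alternative to the form, Airflow can also read a connection from an environment variable named AIRFLOW_CONN_ followed by the upper-cased connection id. The sketch below is an assumption-laden equivalent of the fields above, not part of the UI workflow: TD_HOST, TD_USER, and TD_PASSWORD are hypothetical placeholders, and the JSON form requires a reasonably recent Airflow 2 release. Connections defined this way do not appear under Admin > Connections.

```shell
# Placeholders: replace with your Vantage hostname and database credentials.
TD_HOST="<vantage-hostname>"
TD_USER="<database-user>"
TD_PASSWORD="<database-user-password>"

# Airflow resolves the connection id teradata_default from this variable.
# "schema" carries the value of the Database field in the connection form.
# Values containing double quotes or backslashes would need JSON escaping.
export AIRFLOW_CONN_TERADATA_DEFAULT="$(printf \
  '{"conn_type": "teradata", "host": "%s", "login": "%s", "password": "%s", "schema": "jaffle_shop"}' \
  "$TD_HOST" "$TD_USER" "$TD_PASSWORD")"
```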

Define DAG in Apache Airflow

DAGs in Airflow are defined as Python files. The DAG below runs the dbt transformations defined in the jaffle_shop dbt project on a Teradata Vantage system using Cosmos. Copy the Python code below and save it as airflow-cosmos-dbt-teradata-integration.py under the directory $AIRFLOW_HOME/dags.
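One way to create the file from a shell is a here-document. The Python inside is a minimal sketch rather than a definitive listing. It assumes a Cosmos release that ships TeradataUserPasswordProfileMapping, an Airflow version that accepts the schedule argument, and that dbt_venv_dir holds the path of the dbt executable. The profile name, target name, DAG id, and start date are illustrative.

```shell
# Fall back to ~/airflow if AIRFLOW_HOME is not set in this shell (an assumption).
: "${AIRFLOW_HOME:=$HOME/airflow}"
mkdir -p "$AIRFLOW_HOME/dags"

# The quoted 'EOF' keeps the shell from expanding anything inside the Python source.
cat > "$AIRFLOW_HOME/dags/airflow-cosmos-dbt-teradata-integration.py" <<'EOF'
import os
from datetime import datetime

from cosmos import DbtDag, ExecutionConfig, ProfileConfig, ProjectConfig
from cosmos.profiles import TeradataUserPasswordProfileMapping

# Both variables must be exported in the shell that starts Airflow.
dbt_project_home_dir = os.environ["dbt_project_home_dir"]
# Assumed to be the path of the dbt executable inside the dbt_env environment.
dbt_executable = os.environ["dbt_venv_dir"]

# Build the dbt profile from the Airflow connection teradata_default.
profile_config = ProfileConfig(
    profile_name="jaffle_shop",  # illustrative name
    target_name="dev",  # illustrative name
    profile_mapping=TeradataUserPasswordProfileMapping(conn_id="teradata_default"),
)

# Cosmos turns the models of the dbt project into Airflow tasks.
dbt_teradata_dag = DbtDag(
    dag_id="execute_dbt_teradata_transformations_cosmos",  # illustrative id
    project_config=ProjectConfig(dbt_project_path=dbt_project_home_dir),
    profile_config=profile_config,
    execution_config=ExecutionConfig(dbt_executable_path=dbt_executable),
    schedule=None,  # no schedule; trigger the DAG manually from the UI
    start_date=datetime(2024, 1, 1),
    catchup=False,
)
EOF
```

Reading the two paths from the environment keeps machine-specific locations out of the DAG file, and the profile mapping means no database credentials are written to a profiles.yml by hand.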

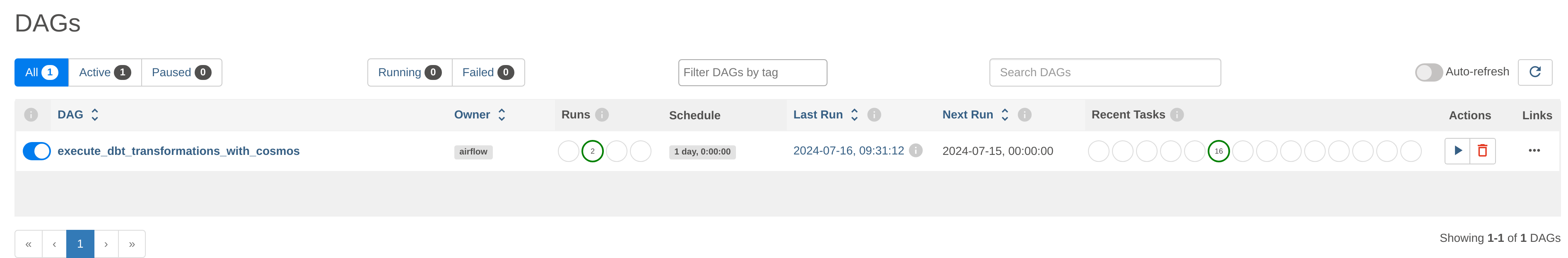

Load DAG

Once the DAG file is copied to $AIRFLOW_HOME/dags, Apache Airflow displays the DAG in the UI under the DAGs section. It can take 2 to 3 minutes for the DAG to load in the Apache Airflow UI.

Run DAG

Run the DAG as shown in the image below.

Summary

In this quick start guide, we explored how to use the Astronomer Cosmos library in Apache Airflow to execute dbt transformations against a Teradata Vantage instance.